Have you ever suddenly realized that your large project needs to be rebuilt because you ran into a performance problem you could not solve? When your company has a project that is popular enough to potentially face millions of online users, you need to stop and think about this in advance.

A correctly built architecture is key to a successful startup. Plan the architecture so that it anticipates the growth in the number of users of the product over the next few years. This will help you keep the startup stable.

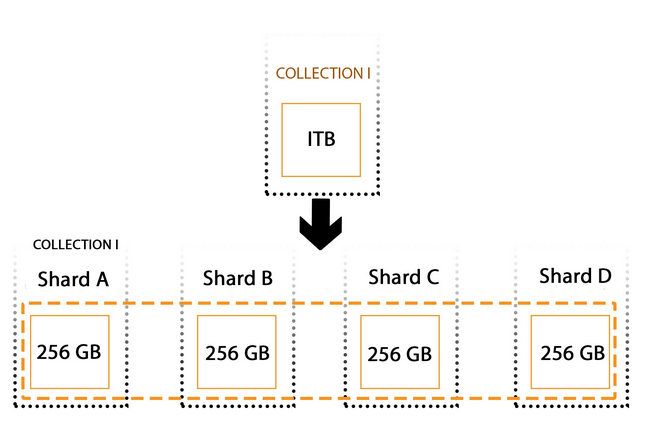

This is where sharding comes in. Sharding is the process of storing data records across multiple machines. It can be done manually or with one of the solutions available on the market. Is your system based on a relational database? It is important to decide how you work with your data and to understand how that work is performed in your project. Every data query should be optimized, and the data model should be partitioned and cached with its expensive points in mind. It is recommended to delete data in a deferred way rather than at the moment of the operation.

Caching also needs care: the data stored in the cache must be consistent and must match the real data as precisely as possible. A very common issue is that data in the cache becomes inconsistent with the source. Analyzing these situations and handling them gracefully requires additional effort.
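
As a rough illustration of manual sharding, the sketch below routes each record to one of several machines by hashing its key. The shard names, the `shard_for`, `put` and `get` helpers, and the use of plain dictionaries in place of real machines are all assumptions made for this example, not part of any particular product.

```python
import hashlib

# Hypothetical shard names; a real deployment would hold connection details.
SHARDS = ["shard-0", "shard-1", "shard-2", "shard-3"]


def shard_for(key: str, shards=SHARDS) -> str:
    """Pick a shard by hashing the record key.

    A stable hash (MD5, used here only for distribution, not security) is
    used instead of Python's built-in hash(), which is salted per process.
    """
    digest = hashlib.md5(key.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(shards)
    return shards[index]


# Each shard is modelled as a plain dict standing in for one machine.
storage = {name: {} for name in SHARDS}


def put(key: str, record: dict) -> None:
    """Store the record on the shard that owns its key."""
    storage[shard_for(key)][key] = record


def get(key: str):
    """Read the record back from the shard that owns its key."""
    return storage[shard_for(key)].get(key)


put("user:42", {"name": "Alice"})
print(shard_for("user:42"), get("user:42"))
```

One limitation of this simple modulo scheme is that changing the number of shards moves most keys to a different shard; consistent hashing is a common alternative when shards are added or removed often.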

Data sharding as part of your software architecture

The next most important point is protecting the solution against hardware failures. Here it is necessary to think about replication and about surviving data loss.

Nowadays there are many free and commercial solutions on the market that are intended to cope with these issues. For example, MongoDB, Cassandra and Hadoop are good candidates, as they are proven, stable solutions.

As you will see further, many solutions for high load systems are based on key-value data. That is because this data model covers many aspects of application systems, except for some cases where the relational data model is mandatory. These are typically cases where various calculations need to be performed over the data, e.g. reporting systems.

Developers use MongoDB as one variant of the solution to improve the software architecture

The name MongoDB takes five letters, "mongo", from the word "humongous". It is classified as a NoSQL database. MongoDB does not use the traditional table-based relational structure. Instead, it uses JSON-like documents with dynamic schemas. For some high load applications, the traditional relational model can be too heavy and not optimal.
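
To make "JSON-like documents with dynamic schemas" more concrete, here is a small sketch in plain Python. It deliberately does not use the MongoDB driver: the `users` list and the `find` helper are hypothetical stand-ins that only illustrate how documents in one collection may carry different fields.

```python
import json

# A plain list stands in for a collection; this is not the MongoDB API.
users = []

users.append({"_id": 1, "name": "Alice", "email": "alice@example.com"})
# The second document adds a nested field and omits "email";
# no schema change is needed for that.
users.append({"_id": 2, "name": "Bob", "address": {"city": "Riga"}})


def find(collection, **criteria):
    """Return documents whose top-level fields match all criteria."""
    return [
        doc for doc in collection
        if all(doc.get(field) == value for field, value in criteria.items())
    ]


print(json.dumps(find(users, name="Bob"), indent=2))
```

The trade-off is that the application, not the database schema, has to cope with documents that lack a given field.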

Cassandra as one variant of the solution to supply your startup with the information store

Cassandra was created for fast writes, scalability and fault tolerance. It has proven itself to be stable. It is a non-relational database with a key-value oriented (wide-column) data model. It provides a special API that is used to interact either with a single node or with a cluster.

Hadoop as one variant of the solution to power your startup with Big Data store

Hadoop is a solution for working with a cluster of computing nodes. Each node contributes its resources to the cluster: physical storage and computing power. Hadoop provides a technology that distributes a query across the cluster, runs it and returns the result. Optimizing the communication cost between nodes is essential.

That technology is called MapReduce (“map and reduce”): we first map the input data to key-value pairs and then reduce that data set to obtain the actual result on the receiving side.

Each Map function output is allocated to a particular reducer by the application’s partition function for sharding purposes.

The framework calls the application’s Reduce function once for each unique key, in sorted order. Reduce can iterate through the values that are associated with that key and produce zero or more outputs.
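
The map, partition and reduce steps described above can be sketched in a single process. This is only a toy model of the idea, not Hadoop's API: `map_fn`, `partition_fn`, `reduce_fn` and `run_job` are hypothetical names, and word counting is an assumed example task.

```python
from collections import defaultdict


def map_fn(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def partition_fn(key, num_reducers):
    """Partition: choose the reducer responsible for a key.

    A sum of character codes is used so the result is stable across runs.
    """
    return sum(ord(ch) for ch in key) % num_reducers


def reduce_fn(key, values):
    """Reduce: called once per unique key; may emit zero or more outputs."""
    yield key, sum(values)


def run_job(lines, num_reducers=2):
    # One bucket per reducer: key -> list of values from all map calls.
    buckets = [defaultdict(list) for _ in range(num_reducers)]
    for line in lines:
        for key, value in map_fn(line):
            buckets[partition_fn(key, num_reducers)][key].append(value)

    results = {}
    for bucket in buckets:
        # Each reducer processes its keys in sorted order.
        for key in sorted(bucket):
            for out_key, out_value in reduce_fn(key, bucket[key]):
                results[out_key] = out_value
    return results


counts = run_job(["to be or not to be", "to do"])
print(counts)
```

In a real cluster the buckets would live on different machines, which is why the amount of data moved between the map and reduce phases matters so much.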

The distributed file system provided by Hadoop, HDFS, manages the storage of files in a cluster of Hadoop instances. It handles data replication and fail-over procedures. Java developers know very well how important it is to choose the right solution for that purpose.

Other variants of solutions

Alternative options may be chosen. In some cases it may even be preferable to implement your own proprietary solution.

Java or .NET?

The Java environment is preferred by many vendors because of its cross-platform compatibility and the number of available open source solutions.

Some popular highly loaded projects prefer different technical solutions, for example .NET. If your platform is developed in .NET, it is worth considering the same approaches to solve similar problems.

Data Partitioning

The main bottleneck in solutions that require top performance is the speed of reading and writing data records on the storage media. File operations must be performed in the most efficient way, based on the specifics of the file system.

Here we see two different types of partitioning: vertical and horizontal.

Vertical Partitioning

Working with a single large file or table is typically slow, so the data is split into several smaller pieces that each hold less data. When the split is made by columns, so that each piece holds a subset of the fields of every record, it is called vertical partitioning.
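
In the common database sense of the term, vertical partitioning splits records by columns, so that small, frequently read fields are stored apart from large, rarely read ones. The sketch below illustrates that idea; the sample rows and the `split_columns` and `join_parts` helpers are hypothetical and exist only for this example.

```python
# Hypothetical user records; "bio" stands for a large, rarely read field.
rows = [
    {"id": 1, "name": "Alice", "bio": "a long biography text"},
    {"id": 2, "name": "Bob", "bio": "another long biography text"},
]


def split_columns(rows, key, column_groups):
    """Split each row into one part per column group, keeping the key in each."""
    parts = [[] for _ in column_groups]
    for row in rows:
        for part, columns in zip(parts, column_groups):
            part.append({key: row[key], **{c: row[c] for c in columns}})
    return parts


def join_parts(parts, key):
    """Rebuild full rows by merging the parts on the shared key."""
    merged = {}
    for part in parts:
        for piece in part:
            merged.setdefault(piece[key], {}).update(piece)
    return [merged[k] for k in sorted(merged)]


hot, cold = split_columns(rows, "id", [["name"], ["bio"]])
print(hot)                                   # small, frequently read part
print(join_parts([hot, cold], "id") == rows)  # parts can be recombined
```

Each part can then be stored in its own file or table, and queries that need only the small fields never touch the large ones.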

Horizontal Partitioning

Horizontal partitioning is used to take advantage of the joint computing power of several machines. Data is split across different nodes of a cluster, using the above-mentioned solutions. See the diagram below.

Preparing for high loads by using horizontal partitioning

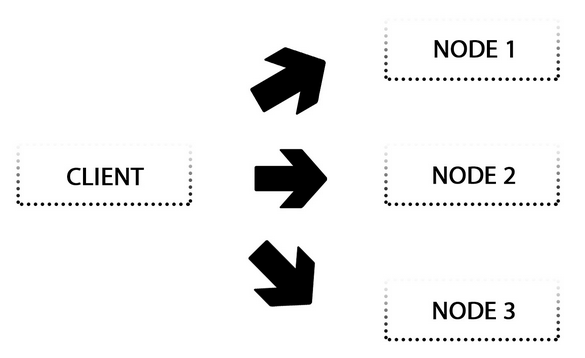

All data records must be grouped. These groups can then be separated and placed on different nodes. In such a system, since the data is located on different nodes, we need to maintain a connection to the target node, which we use to access and manage the data there.
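
One possible way to group records and find the target node is range partitioning by record id, sketched below. The boundaries, the node names and the `Cluster` class are assumptions for illustration, and dictionaries stand in for real network connections.

```python
import bisect

# Hypothetical layout: exclusive upper bounds of the first three id ranges.
BOUNDARIES = [1000, 2000, 3000]
# One node per range; the last node takes all ids >= 3000.
NODES = ["node-a", "node-b", "node-c", "node-d"]


def node_for(record_id: int) -> str:
    """Return the node that owns the range containing record_id."""
    return NODES[bisect.bisect_right(BOUNDARIES, record_id)]


class Cluster:
    """Keeps one 'connection' per node; dicts stand in for real connections."""

    def __init__(self):
        self.connections = {name: {} for name in NODES}

    def save(self, record_id, record):
        self.connections[node_for(record_id)][record_id] = record

    def load(self, record_id):
        return self.connections[node_for(record_id)].get(record_id)


cluster = Cluster()
cluster.save(1500, {"title": "order 1500"})
print(node_for(1500), cluster.load(1500))
```

Range partitioning keeps neighbouring ids on the same node, which helps range scans but can overload one node if most traffic falls into a single range.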

Stability

When we touch the topic of stability, we need to speak about the replication of data between nodes. The advice here is to keep the replica of each node on the next node. Then the replica can be found in the easiest possible way. Geographical distribution of data nodes should also be considered.
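
The "replica on the next node" advice can be sketched as a ring: the replica of node i lives on node i + 1, and the last node wraps around to the first. The node names and the `replica_of`, `write` and `read` helpers below are hypothetical, and failure detection is reduced to a set of failed node names.

```python
NODES = ["node-0", "node-1", "node-2"]  # hypothetical node names


def replica_of(primary: str, nodes=NODES) -> str:
    """The replica lives on the next node, wrapping around at the end."""
    return nodes[(nodes.index(primary) + 1) % len(nodes)]


def write(key, value, primary, data):
    """Write to the primary and to its replica on the next node."""
    for node in (primary, replica_of(primary)):
        data[node][key] = value


def read(key, primary, data, failed=frozenset()):
    """Read from the primary, falling back to its replica if it is down."""
    for node in (primary, replica_of(primary)):
        if node not in failed:
            return data[node].get(key)
    raise RuntimeError("primary and replica are both unavailable")


data = {name: {} for name in NODES}
write("session:7", "active", "node-2", data)
print(replica_of("node-2"))                                  # wraps to node-0
print(read("session:7", "node-2", data, failed={"node-2"}))  # served by replica
```

Because the replica location follows directly from the primary's position, no lookup table is needed to find it.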

Balancing

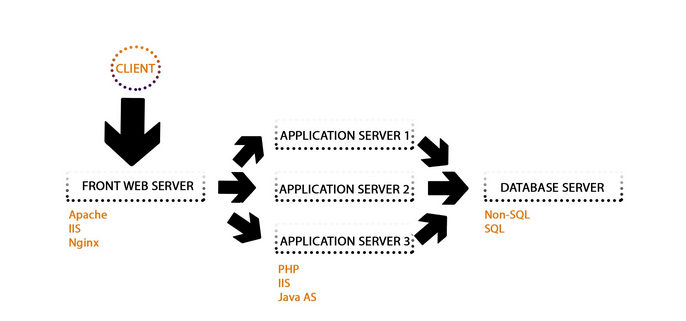

Web requests are forwarded from the load balancing server to a target web server that is a member of the cluster. This can be done using the standard means of web servers. One of the most popular open source web servers is Nginx.

Architecture of typical high load system

The most popular web servers support load balancing based on Round Robin, which is the simplest scenario. Along with the Round Robin algorithm, web servers provide many alternative algorithms. This allows you to pass requests to multiple IP addresses of application web servers inside the cluster. This sample architecture is shown in the next diagram.

Typical architecture of a startup ready for high loads

In this case, requests are passed in turn to the members of the cluster. This means that the load is distributed evenly across all members of the cluster.
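
The Round Robin idea itself is simple enough to sketch in a few lines. The `RoundRobinBalancer` class and the IP addresses below are hypothetical and only model the selection logic; in practice the web server does this for you.

```python
import itertools
from collections import Counter


class RoundRobinBalancer:
    """Hands out backend servers one after another, in a fixed cycle."""

    def __init__(self, servers):
        if not servers:
            raise ValueError("at least one server is required")
        self._cycle = itertools.cycle(list(servers))

    def next_server(self):
        """Return the server that should receive the next request."""
        return next(self._cycle)


# Hypothetical addresses of application web servers inside the cluster.
balancer = RoundRobinBalancer(["10.0.0.1", "10.0.0.2", "10.0.0.3"])
picked = [balancer.next_server() for _ in range(6)]
print(Counter(picked))  # each server received the same number of requests
```

Plain Round Robin assumes that all servers have similar capacity and that requests cost roughly the same; weighted or least-connections strategies are typical alternatives when they do not.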

Conclusion

The best idea is to think about efficiency while designing the architecture. This will save you the effort of optimizing critical points when your startup faces high load. The solutions mentioned above are used in practice by many vendors and are indispensable helpers. It is recommended to plan the architecture of your startup product in advance.

You can find additional information and articles on our website: Diatom Enterprises

All registered trademarks and copyrights are properties of their respective owners.